PDF: Anatomy of a Document Format and the Paradox it Presents for AI

- Marianne Calilhanna

- Apr 5

Updated: Apr 14

In 1990, Dr. John Warnock launched his idea for The Camelot Project. The goal was a universal way to share documents across computers, operating systems, and networks without losing formatting: a document could be created once, then reliably viewed, printed, and exchanged anywhere with its exact appearance preserved. The result, the Portable Document Format (PDF), was elegant in its simplicity, yet beneath that simplicity lay a deeply complex specification engineered to capture layout, typography, and graphics across any environment.

And the PDF became ubiquitous.

Today, PDFs are everywhere. The PDF is the most widely used digital document format, with more than 290 billion new PDF documents created every year (Smallpdf, 2025). Government agencies and regulated industries rely heavily on PDFs, with millions of official documents published in the format. Despite investing heavily in XML-early workflows over the years, scholarly publishers continue to provide research articles as PDFs. The PDF software market, currently valued at more than $2 billion, is projected to nearly triple to $5.72 billion by 2033 (Global Growth Insights, 2026). All these metrics signal that organizational dependence on the format is deepening, not diminishing.

Behind the Scenes

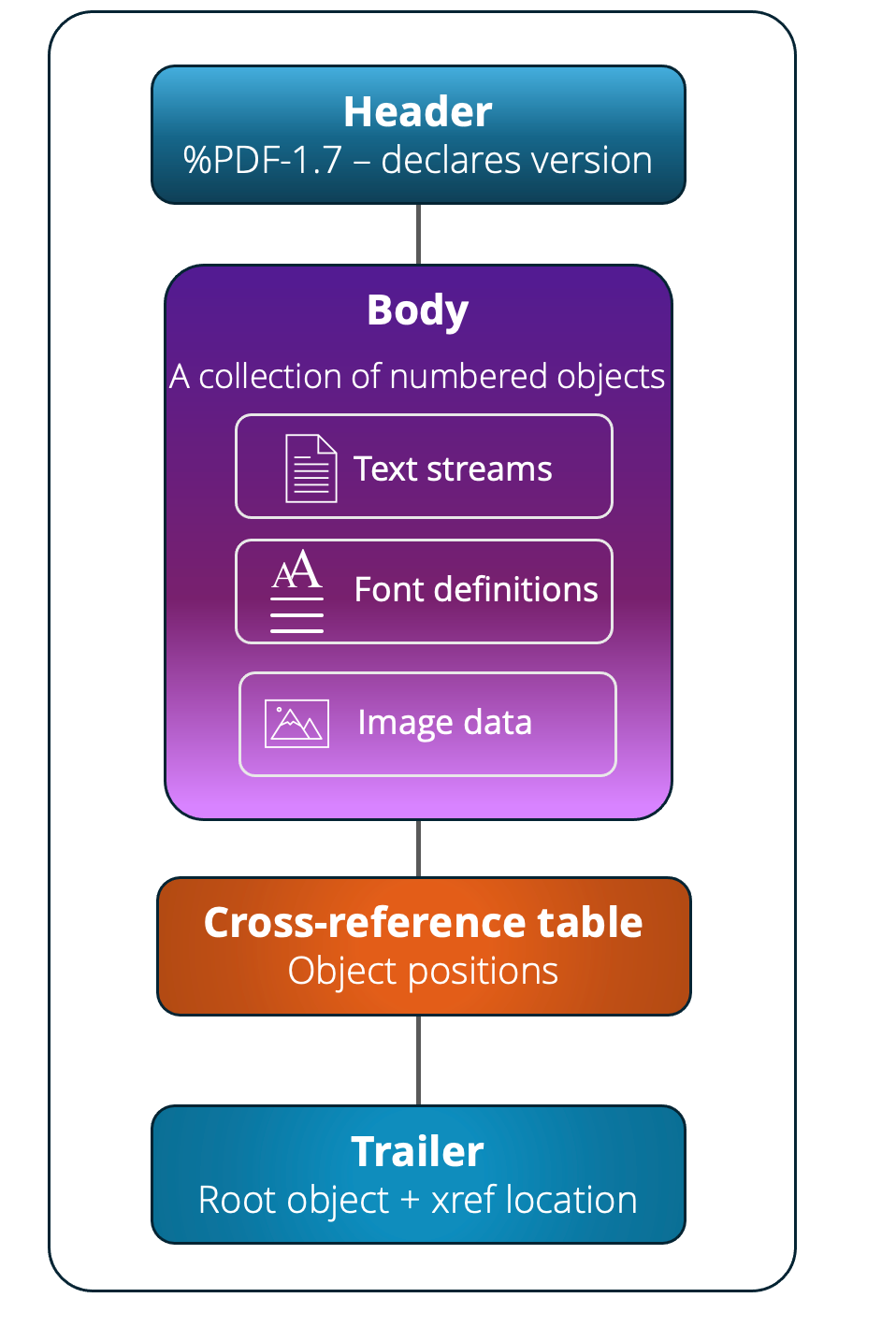

There are four main sections in a PDF file:

Header: the first line of the file. It simply states "I am a PDF" and which version (e.g., %PDF-1.7).

Body: the meat of the file. It's a collection of numbered objects. Each object has its own ID and contains one specific thing. Objects can be:

Content streams (the actual words, plus the instructions for where to draw them)

Font definitions

Image data

Page structure info

Metadata (author, title, creation date)

Cross-Reference Table (xref): the file's index. This table lists every object and its exact byte position in the file. This is how a PDF reader can jump straight to page 42 without reading the whole file first. It's like a table of contents, but for internal data.

Trailer: the very end of the file. It tells the reader where the xref table starts and which object is the "root" (the entry point for the whole document). A PDF reader typically reads the end of the file first, then works backwards.
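
The four sections can be made concrete with a short Python sketch. It is only an illustration under stated assumptions: the helper names `build_minimal_pdf` and `read_pdf_skeleton` are hypothetical, the file is a tiny hand-built one, and the reader handles only the classic plain-text xref table (newer PDFs may store this index in a compressed stream, which this sketch does not cover).

```python
import re

def build_minimal_pdf() -> bytes:
    """Assemble a tiny PDF by hand, recording each object's real byte offset."""
    objects = [
        b"<< /Type /Catalog /Pages 2 0 R >>",
        b"<< /Type /Pages /Kids [3 0 R] /Count 1 >>",
        b"<< /Type /Page /Parent 2 0 R /MediaBox [0 0 612 792] >>",
    ]
    out = bytearray(b"%PDF-1.7\n")            # Header
    offsets = []
    for num, body in enumerate(objects, start=1):
        offsets.append(len(out))              # byte position of this object
        out += b"%d 0 obj\n" % num + body + b"\nendobj\n"   # Body
    xref_pos = len(out)
    out += b"xref\n0 %d\n" % (len(objects) + 1)             # Cross-reference table
    out += b"0000000000 65535 f \n"           # entry 0 is the head of the free list
    for off in offsets:
        out += b"%010d 00000 n \n" % off      # each entry is exactly 20 bytes
    out += b"trailer\n<< /Size %d /Root 1 0 R >>\n" % (len(objects) + 1)
    out += b"startxref\n%d\n%%%%EOF\n" % xref_pos           # Trailer
    return bytes(out)

def read_pdf_skeleton(data: bytes) -> dict:
    """Read the header, then start at the end: startxref -> xref table -> trailer."""
    version = re.match(rb"%PDF-(\d\.\d)", data).group(1).decode()
    # The last 'startxref' keyword is followed by the byte offset of the xref table.
    tail = data[data.rindex(b"startxref"):]
    xref_pos = int(tail.split()[1])
    lines = data[xref_pos:].split(b"\n")
    assert lines[0] == b"xref"
    first, count = map(int, lines[1].split())
    offsets = {}
    for i in range(count):
        off, _gen, kind = lines[2 + i].split()
        if kind == b"n":                      # 'n' = in use, 'f' = free
            offsets[first + i] = int(off)
    root = re.search(rb"/Root (\d+) 0 R", data[xref_pos:]).group(1)
    return {"version": version, "xref_pos": xref_pos,
            "offsets": offsets, "root": int(root)}

pdf = build_minimal_pdf()
info = read_pdf_skeleton(pdf)
print(info["version"], info["root"], info["offsets"])
```

Notice that nothing in this skeleton says "heading" or "table." The index only records where each numbered object lives in the file.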

PDFs Preserve Layout Not Meaning

PDFs preserve layout, typography, and visual intent with incredible precision. That’s why they’ve become the default for everything from research to regulation. You instinctively understand:

What’s a title vs. a heading

How a table is organized

Which caption belongs to which figure

Where a footnote connects

In most PDFs, none of this is actually recorded in the file.

The problem is that PDFs encode appearance. They do not encode meaning.

The latent structure you see, the hierarchy, the relationships, the organization, is something your brain reconstructs automatically. Spend five seconds looking at a PDF and you immediately understand it.

But machines don’t have this ability. They do not see the meaning behind a formatted document the way our human brains do.

What Your AI Actually Sees

While humans see a well-organized document, AI systems “see”:

Text fragments

X/Y coordinates

Font sizes and styles

Disconnected objects

There is no “heading.” No “table.” No “footnote.” Just positioned text.
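
To picture what "just positioned text" means, here is a minimal Python sketch. The `Fragment` class, the sample page, and the `guess_headings` heuristic are all hypothetical, invented for illustration. They are not the output or API of any particular extraction tool.

```python
from dataclasses import dataclass

@dataclass
class Fragment:
    text: str
    x: float      # left edge, in points
    y: float      # baseline, in points from the bottom of the page
    size: float   # font size, in points

# Hypothetical fragments, roughly what a text extractor might return for one page.
page = [
    Fragment("Quarterly Results", 72, 720, 18),
    Fragment("Revenue grew in every region.", 72, 690, 11),
    Fragment("Outlook", 72, 650, 14),
    Fragment("We expect modest growth next year.", 72, 625, 11),
]

def guess_headings(fragments, ratio=1.2):
    """Guess headings: any fragment whose font is clearly larger than the most common size."""
    sizes = [f.size for f in fragments]
    body_size = max(set(sizes), key=sizes.count)   # most frequent size = body text
    return [f.text for f in fragments if f.size >= body_size * ratio]

print(guess_headings(page))
# → ['Quarterly Results', 'Outlook']
```

The heuristic works on this page only because the headings happen to be larger than the body text. A bold heading set at body size would be missed, and a large pull quote would be mislabeled, which is exactly the kind of guessing the file format forces on software.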

The AI Paradox

LLMs can provide answers from PDFs, but that ability also hides how fragile those answers are. You can point AI at a repository of PDFs and get immediate value. Answers sound right. It looks like success. But under the surface:

Tables are flattened or misinterpreted

Reading order is guessed

Context is incomplete

Relationships are lost

And because the output of ChatGPT and other LLMs sounds convincing and fluent, the problems are easy to miss. AI doesn’t fix bad structure; it obscures it.
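
A small Python sketch shows how a guessed reading order can go wrong. The fragments, the coordinates, and the `gutter_x` column boundary are assumptions made up for this example, and real extraction tools use more elaborate heuristics than either function here.

```python
# Hypothetical fragments (text, x, y) from a two-column page; y counts up from the page bottom.
fragments = [
    ("The study enrolled", 72, 700),
    ("300 patients.", 72, 686),
    ("Side effects were", 320, 700),
    ("mild and rare.", 320, 686),
]

def naive_order(frags):
    """Sort top-to-bottom, then left-to-right, ignoring columns entirely."""
    return [text for text, x, y in sorted(frags, key=lambda f: (-f[2], f[1]))]

def column_aware_order(frags, gutter_x=300):
    """Read the whole left column, then the right; gutter_x is an assumed column boundary."""
    left = [f for f in frags if f[1] < gutter_x]
    right = [f for f in frags if f[1] >= gutter_x]
    return naive_order(left) + naive_order(right)

print(" ".join(naive_order(fragments)))
# → The study enrolled Side effects were 300 patients. mild and rare.
print(" ".join(column_aware_order(fragments)))
# → The study enrolled 300 patients. Side effects were mild and rare.
```

Both orderings are built from the same fragments, and both produce fluent-looking text. Only one matches what the author wrote, and nothing in the file itself says which.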

Structure Isn’t Optional Anymore

For years, lack of structure was an inconvenience. Now it’s a liability.

That is because AI systems depend on:

Clean segmentation

Reliable hierarchy

Preserved relationships

Consistent metadata

Without these, you don’t just get lower-quality results; you get unverifiable results. And that’s a serious problem.

The Real Risk

The biggest risk is not that AI fails but that it appears to succeed. And once people trust the AI outputs:

Errors propagate

Decisions rely on shaky data

Confidence outpaces reality

The Harsh Reality

The promise of AI suggests you can unlock value from the content you already have. The reality is harsher:

If your content isn’t structured, your AI isn’t reliable; it’s just convincing.

And convincing is not the same as correct. Modern AI is good enough to appear as though it understands your content, even when the structure is missing or broken. It will summarize, answer questions, and extract insights, and it will sound convincing. This all leads to the AI paradox:

The more powerful AI becomes, the easier it is to trust results built on weak foundations.

DCL Helps Untangle This AI Paradox

For decades, DCL has helped organizations in government, publishing, healthcare, legal, and other content-intensive industries transform unstructured, visually formatted documents into semantically rich, structured content. By converting PDFs and legacy documents into XML, DITA, or other standards-based formats, DCL structures the knowledge locked in static documents, establishing the hierarchy, relationships, metadata, and context that AI systems depend on to deliver reliable results. In an era where the stakes of trusting AI outputs have never been higher, DCL is one of the essential solutions for organizations that need their content to be not just convincing, but correct.

Whatever your industry, and whether you work in document management, AI/ML, legal tech, or enterprise IT, structuring content for AI is one of the most pressing and underappreciated issues of the current AI era.
